Real users. Real devices.

Real feedback.

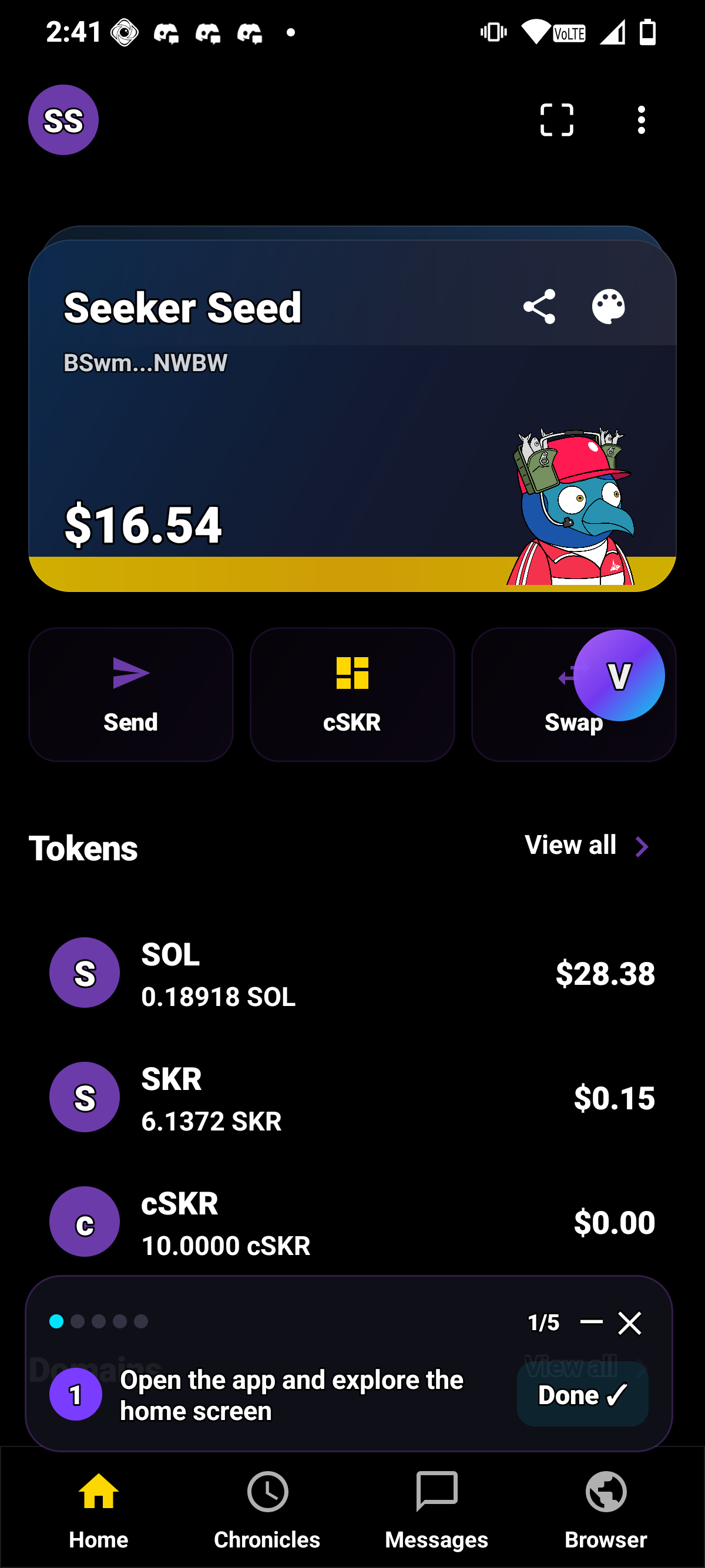

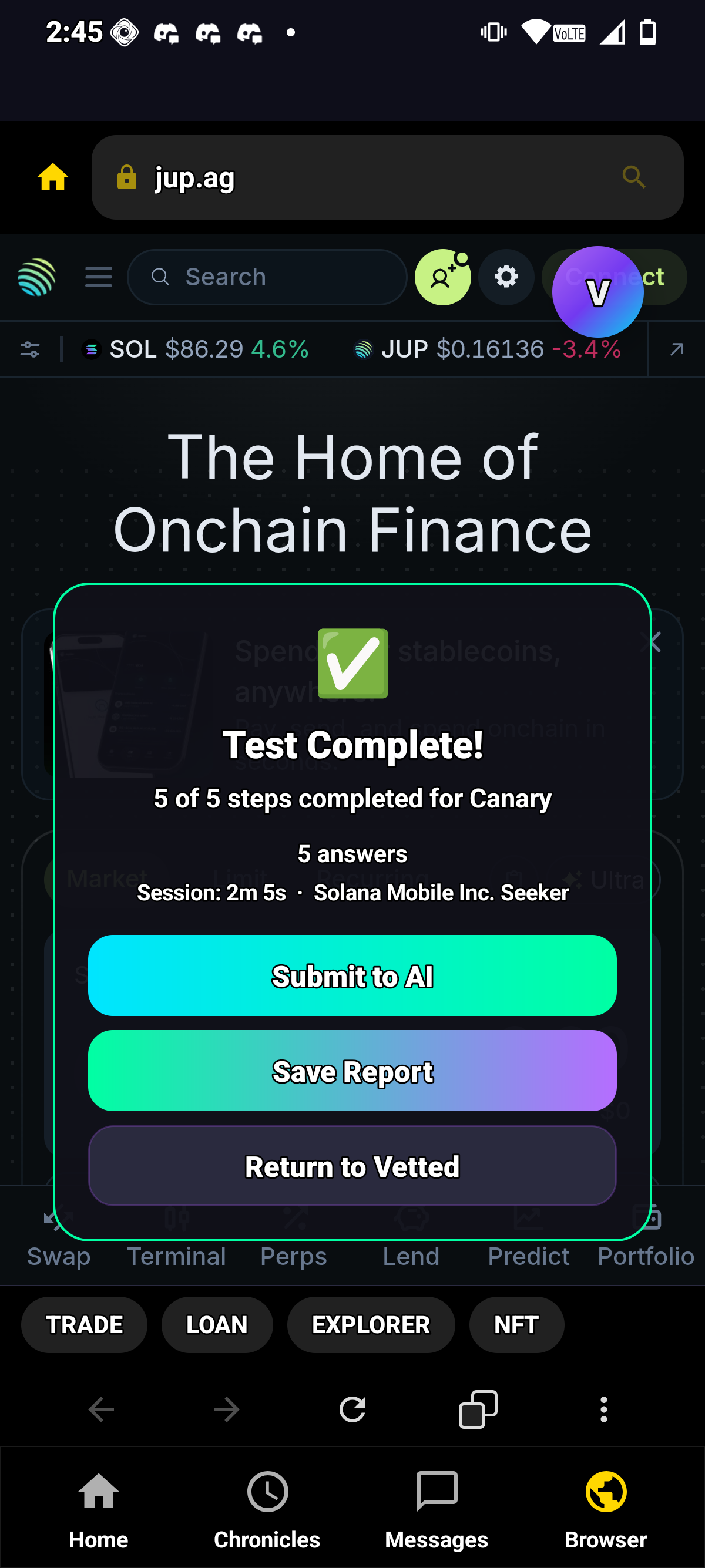

Stop guessing. The SagaDAO community runs your app on real Saga & Seeker devices, end-to-end. SBT-verified testers. Structured walkthroughs. What comes back is pure signal. Ship faster.

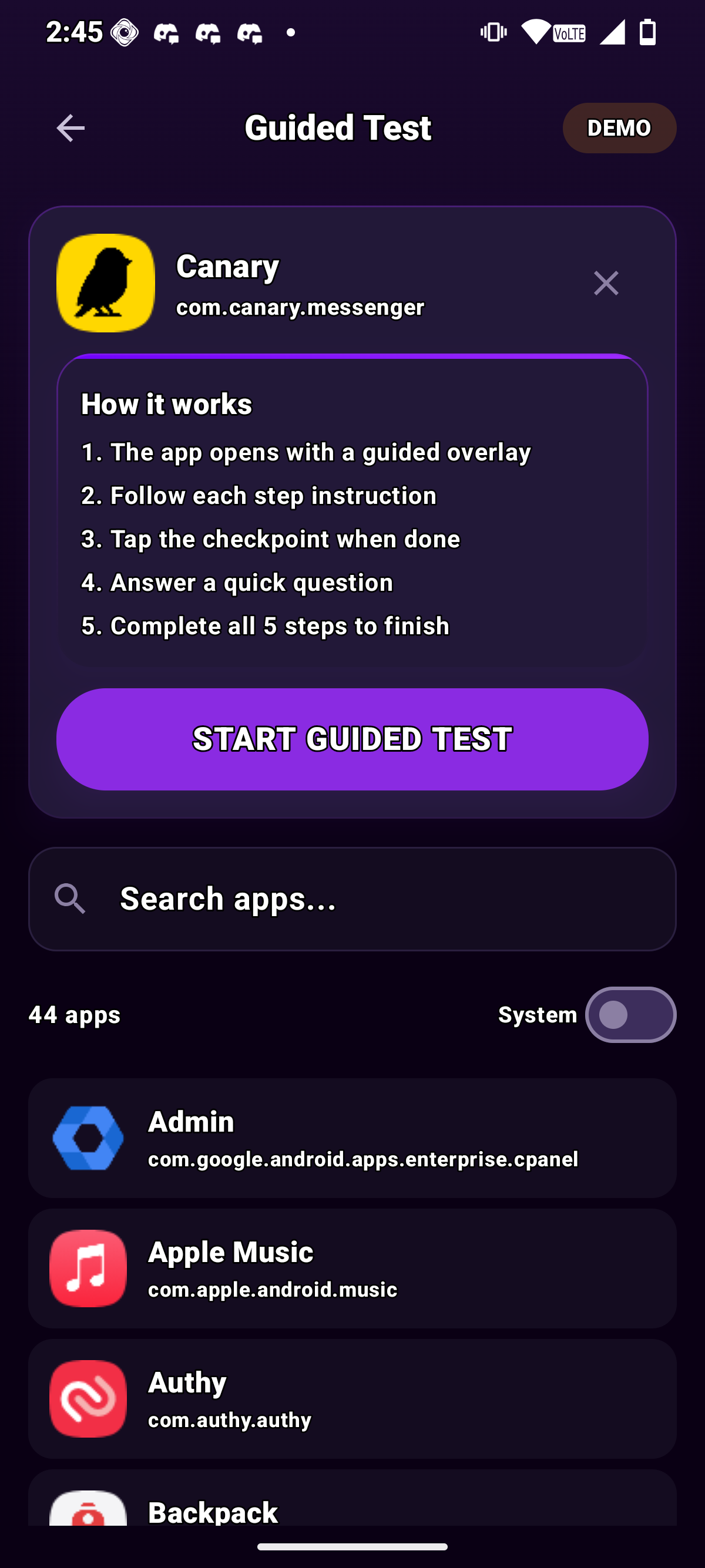

Gated entry. Sequential steps. Low-effort testers get filtered out. What's left is a proven cohort whose feedback you can actually ship on.

"The result is a smaller group of testers, but higher quality feedback that builders can actually use."

· SAGA DAO CORE

Every round delivers structured reports from real community input. Not assumptions. Not vibes. Data you can act on.

Submit your app. Set your criteria. Verified device owners run the pipeline. Each step filters harder. What comes back is actionable intel.

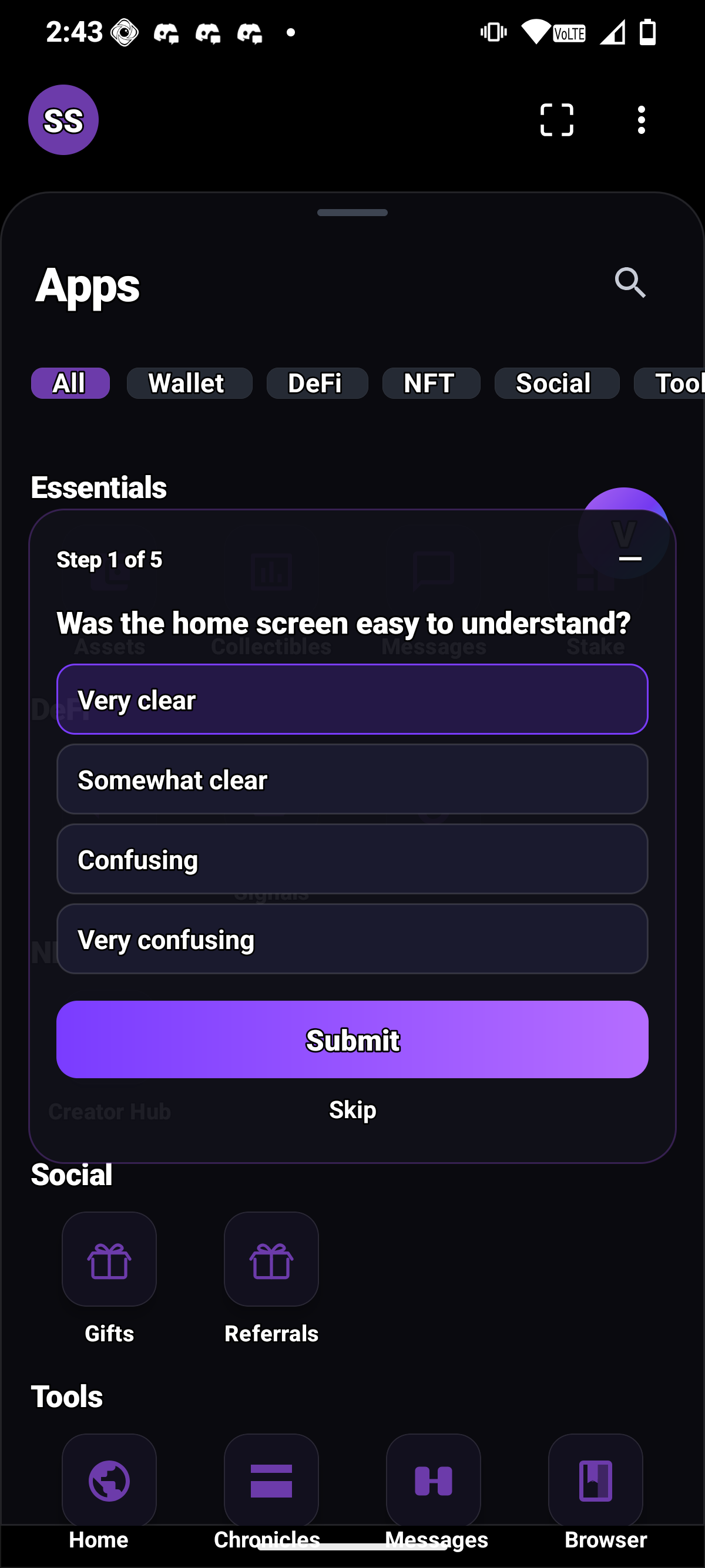

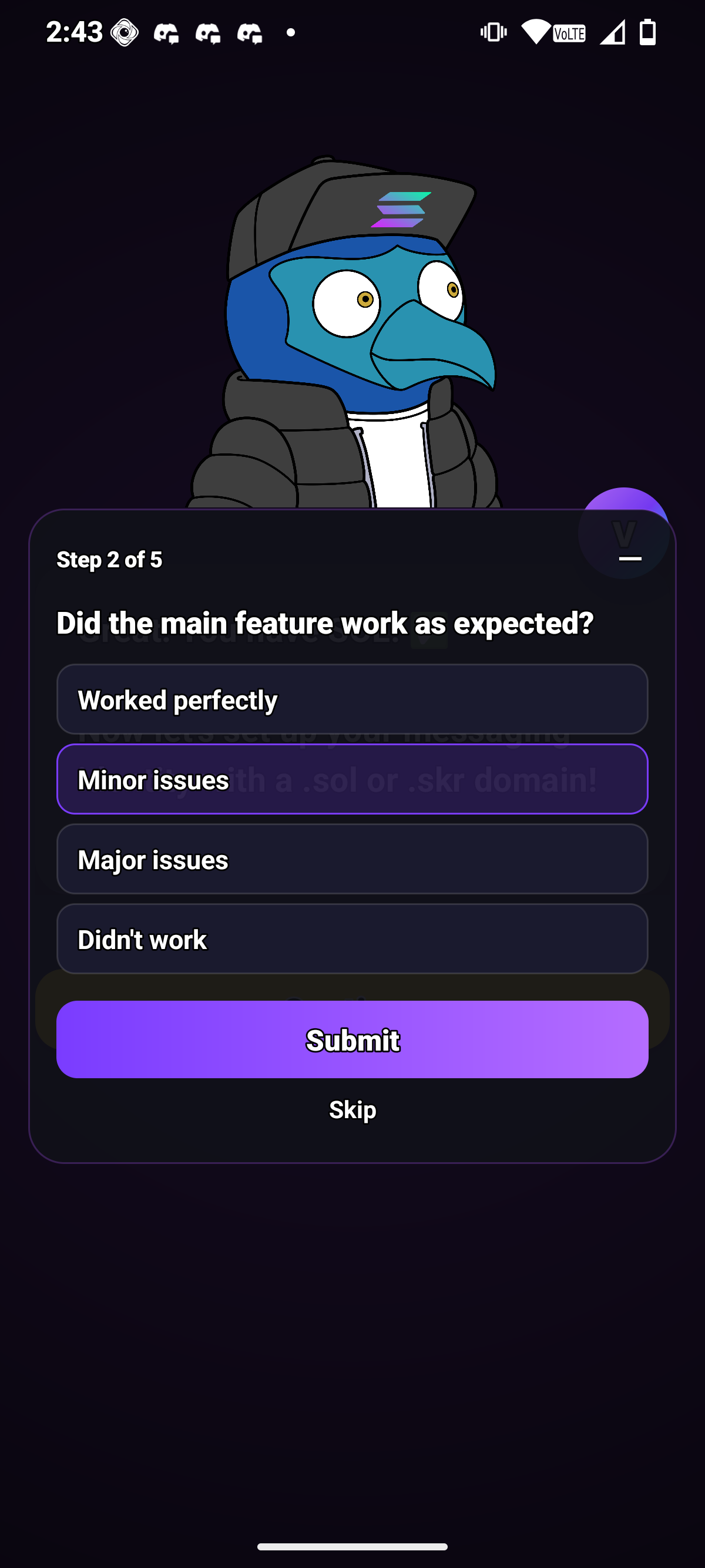

Each stage is completed in order. Every completion includes a small reward or acknowledgment. Not gamification for its own sake, but reinforcement that keeps momentum and reduces drop-off.

"Steps with reinforcement after each stage to keep it digestible, like moving through a three course meal."

· SAGA DAO CORE

The Hub is the social layer of U$ER. A pinned community room sits on top, every live test app gets its own room underneath, and a real-time activity feed shows every step completion, badge, bug report, and rating across the platform.

@bug in any chat and Sentinel files a flag the admins triage from the dashboard. @suggestion routes to the developer. /help for the full list.

/report and Sentinel files a moderation flag carrying the original message id. Admins see it on the Chat Flags page in the dashboard.

Screenshots, feedback, flow events. Analyzed and synthesized into a single consolidated report, with a deterministic fallback when no AI key is configured.

Annotated screenshot uploads get cross-referenced against the raw frame, flagging UI regressions the tester missed. At round close, every feedback entry, issue, flow event, and screenshot gets fed into a single consolidated report synthesizer. Without an AI key configured, the same synthesizer still emits a deterministic report from the raw data so the admin always has something to read.

Every card below points at a real code path in the repo. No vaporware, no roadmap checkboxes.

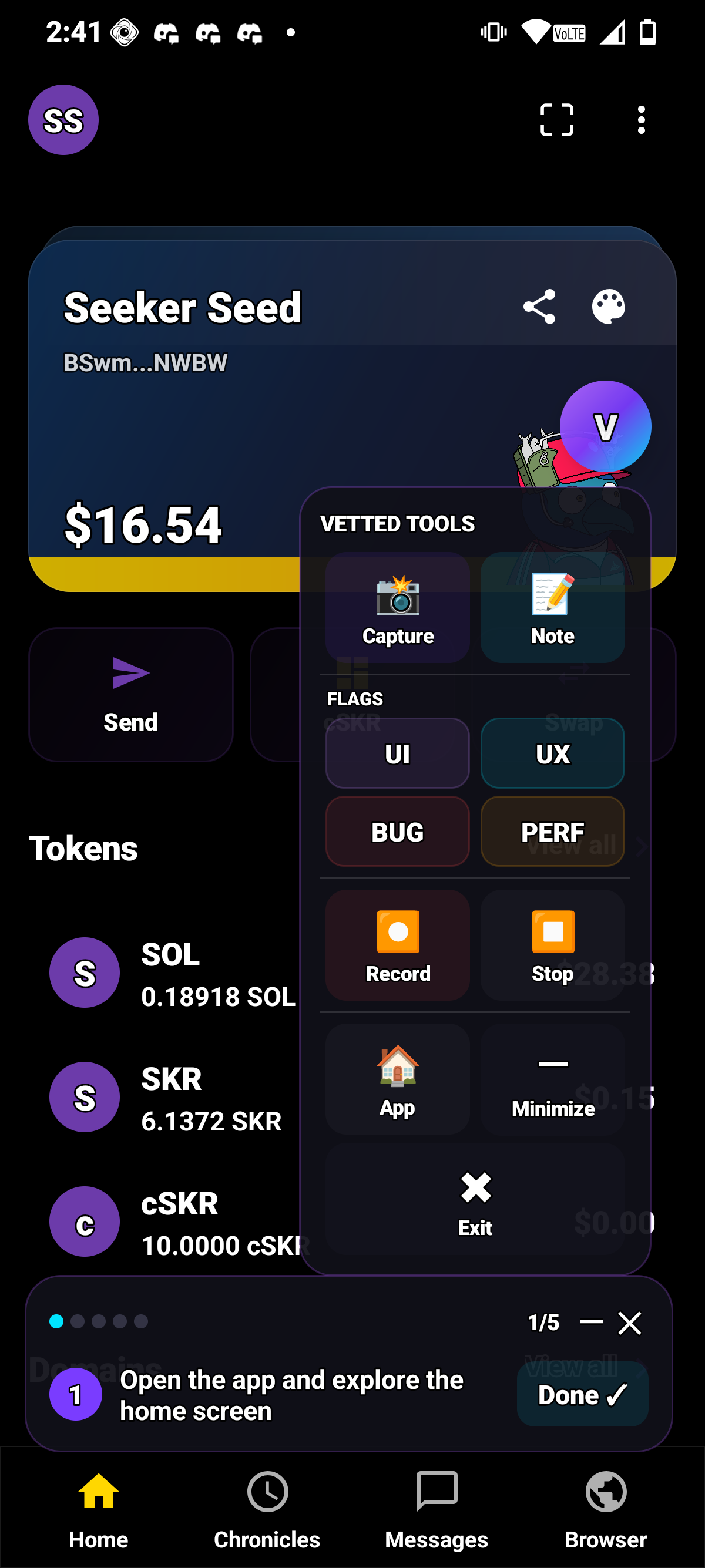

Foreground service surfaces each walkthrough question as a notification action. Testers answer without leaving the target app. Answers sync bidirectionally with the in-app panel.

After 5 minutes with no input, the client stops sending heartbeats. Every beat is clamped server-side so a stale or hostile client can't inflate the active-time counter.
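A rough sketch of what server-side clamping could look like. The 30-second beat interval, the `credit_for_beat` name, and the `claimed_seconds` field are assumptions for illustration, not the platform's actual values or API. The idea is only that the server credits the smallest of what the client claims, what the server itself observed, and a fixed cap.

```python
from typing import Optional

# Assumed values for illustration; the real interval and cap are not documented here.
HEARTBEAT_INTERVAL_SECONDS = 30.0
MAX_CREDIT_PER_BEAT = HEARTBEAT_INTERVAL_SECONDS


def credit_for_beat(last_beat_at: Optional[float], now: float, claimed_seconds: float) -> float:
    """Return how many active seconds the server credits for one heartbeat."""
    if last_beat_at is None:
        # The first beat only opens the window; there is nothing to credit yet.
        return 0.0
    elapsed = now - last_beat_at
    if elapsed <= 0:
        # Replayed or out-of-order beat: credit nothing.
        return 0.0
    # Credit the smallest of: the client's claim, the server-observed gap, the cap.
    return max(0.0, min(claimed_seconds, elapsed, MAX_CREDIT_PER_BEAT))
```

With this shape, a client that goes quiet for 15 minutes and then beats once still earns at most one interval, and a client claiming 9,999 seconds gets the same cap.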

The server refuses to advance a tester until every required question has an answer. On the final step it also refuses if active_seconds < min_test_duration_seconds.
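The gate described above might be sketched like this. Only `active_seconds` and `min_test_duration_seconds` come from the text; the `Step` type, `can_advance`, and the reason strings are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Step:
    # Hypothetical shape: the IDs of questions that must be answered on this step.
    required_question_ids: List[str]
    is_final: bool = False


def can_advance(
    step: Step,
    answers: Dict[str, str],
    active_seconds: int,
    min_test_duration_seconds: int,
) -> Tuple[bool, str]:
    """Decide whether a tester may move past `step`, with a reason."""
    # Blank or whitespace-only answers count as missing.
    missing = [q for q in step.required_question_ids if not answers.get(q, "").strip()]
    if missing:
        return False, "missing required answers: " + ", ".join(missing)
    # Only the final step enforces the minimum active time.
    if step.is_final and active_seconds < min_test_duration_seconds:
        return False, "minimum test duration not reached"
    return True, "ok"
```

Returning a reason alongside the boolean lets the client tell the tester what is still outstanding instead of silently blocking.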

Globals run in every round by default. Customs attach to one submission. The round detail endpoint unions both based on include_global_questions / include_custom_questions, so admins can turn either stream off per round.
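As a minimal sketch of that union: the two flag names are taken from the text, while `questions_for_round`, the `submission_id` key, and the dict-based round config are assumptions. Defaulting the custom flag to on is also an assumption; the text only states the default for globals.

```python
from typing import Dict, List


def questions_for_round(
    round_cfg: Dict,
    global_questions: List[str],
    custom_by_submission: Dict[str, List[str]],
) -> List[str]:
    """Build the question list for one round from the two streams."""
    questions: List[str] = []
    # Globals run by default; an admin can switch them off per round.
    if round_cfg.get("include_global_questions", True):
        questions.extend(global_questions)
    # Customs attach to exactly one submission (default assumed to be on).
    if round_cfg.get("include_custom_questions", True):
        questions.extend(custom_by_submission.get(round_cfg["submission_id"], []))
    return questions
```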

Admin drafts a per-tester payout plan, signs each transfer from their connected wallet, and posts the confirmed signature back. The backend records plan + signature. Sent rows are immutable.
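One way to picture "sent rows are immutable" is a row that accepts edits while planned and rejects everything once a confirmed signature is recorded. The `PayoutRow` type, its fields, and the status names below are hypothetical; this is a sketch of the rule, not the backend's schema.

```python
from dataclasses import dataclass
from typing import Optional


class ImmutableRowError(Exception):
    """Raised when something tries to change a payout row that was already sent."""


@dataclass
class PayoutRow:
    tester_wallet: str
    amount: int                      # smallest token unit, assumed
    status: str = "planned"          # "planned" -> "sent"
    signature: Optional[str] = None  # confirmed transaction signature

    def update_amount(self, amount: int) -> None:
        if self.status == "sent":
            raise ImmutableRowError("sent rows cannot be edited")
        self.amount = amount

    def mark_sent(self, signature: str) -> None:
        if self.status == "sent":
            raise ImmutableRowError("row already has a recorded signature")
        if not signature:
            raise ValueError("a confirmed signature is required")
        self.signature = signature
        self.status = "sent"
```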

Drop-in Android library. Events land next to tester notes in the admin dashboard, so you see crashes and feedback in one place.

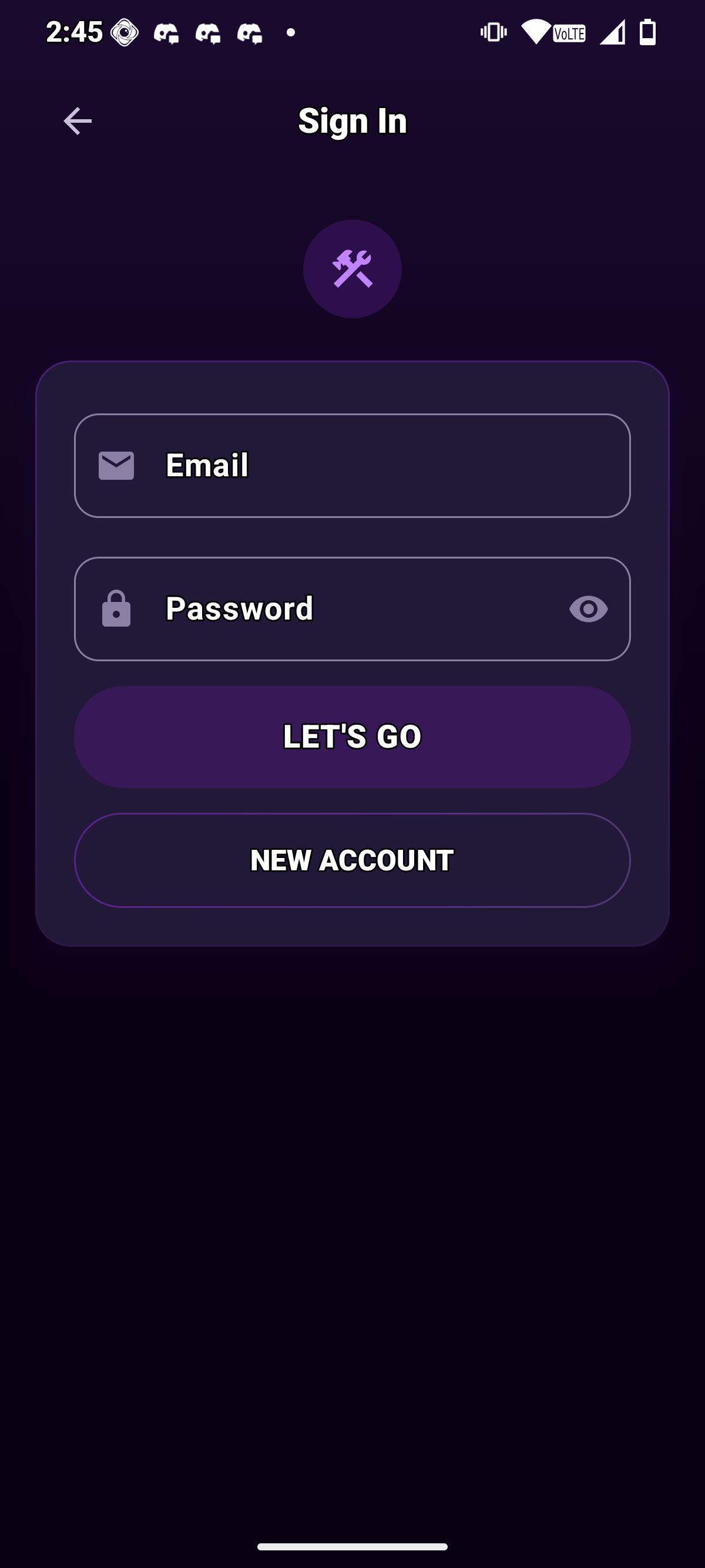

Testers and admins sign in by signing a SIWS message with their Solana wallet. The developer portal supports the same wallet flow with an email fallback for first-time onboarding. Your wallet is your identity across app and web.

With an AI key, the synthesizer writes a narrative consolidated report. Without one, the same code path emits a deterministic report from raw issues, feedback, flow events, and poll distributions.
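A sketch of how one code path can serve both cases: always build the deterministic report from raw data, then hand it to a narrator only if one is configured. Every name here (`deterministic_report`, `consolidated_report`, `narrate`, the `severity` field) is an assumption for illustration, and the output layout is invented.

```python
from collections import Counter
from typing import Callable, Dict, List, Optional


def deterministic_report(
    issues: List[Dict], feedback: List[str], flow_events: List[Dict], poll_answers: List[str]
) -> str:
    """Summarize raw round data; sorted keys keep output stable across runs."""
    severity_counts = Counter(issue["severity"] for issue in issues)
    poll_distribution = Counter(poll_answers)
    lines = [
        f"Issues: {len(issues)}",
        *[f"  {sev}: {n}" for sev, n in sorted(severity_counts.items())],
        f"Feedback entries: {len(feedback)}",
        f"Flow events: {len(flow_events)}",
        "Poll distribution:",
        *[f"  {opt}: {n}" for opt, n in sorted(poll_distribution.items())],
    ]
    return "\n".join(lines)


def consolidated_report(data: Dict, narrate: Optional[Callable[[str], str]] = None) -> str:
    """Same path either way: `narrate` stands in for the AI step when a key exists."""
    base = deterministic_report(**data)
    return base if narrate is None else narrate(base)
```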

The tester app cross-references installed packages against active rounds and routes taps into the real flow. Taps on non-requested apps create an ad-hoc session tracked separately and excluded from aggregated reports.
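The routing decision could be sketched as a lookup from package name to active round. `route_tap`, `launchable_round_apps`, and the returned dict keys are hypothetical names; the real client runs on Android, so this only shows the logic.

```python
from typing import Dict, Iterable, List


def launchable_round_apps(installed: Iterable[str], active_rounds: Dict[str, str]) -> List[str]:
    """Installed packages that are also under test in an active round."""
    return sorted(set(installed) & set(active_rounds))


def route_tap(package_name: str, active_rounds: Dict[str, str]) -> Dict:
    """Decide what kind of session a tap on `package_name` starts."""
    round_id = active_rounds.get(package_name)
    if round_id is not None:
        # Requested app: enter the real round flow.
        return {"kind": "round", "round_id": round_id, "include_in_reports": True}
    # Non-requested app: track separately and keep out of aggregated reports.
    return {"kind": "ad_hoc", "round_id": None, "include_in_reports": False}
```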

Not every tester is equal. The pipeline filters through effort and proof. Each stage demands more. The testers who make it through are the ones worth building with long-term.

Your app goes in. You define the criteria. Verified hardware owners deliver structured reports back.

Every tester is gated by Saga & Seeker Genesis SBTs. One wallet per profile. Daily snapshots. Fully auditable.

Full round completions. Usable feedback. Real in-app actions. Consistent reliability. That's a super tester.

Drop-in Android telemetry. One init() call. Automatic crash capture, UI load timings, dropped frames, screen views, rate-limited ingest, correlated with live test rounds in the admin dashboard.

Unhandled crashes go out as level=fatal event_type=crash. Opt in to captureScreenViews, capturePerformance, and captureSlowFrames for activity load times and Choreographer frame budgets.

Client-side queue flushes every 15s or every 20 events (configurable). Backend rate-limits per API key with per-minute and per-month quotas.
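The flush rule, sketched in Python rather than the SDK's own Android code. The 15-second and 20-event defaults come from the text; the `EventQueue` class, its constructor arguments, and the injected `clock` are assumptions. A real client would call `maybe_flush` from a timer as well as on every enqueue.

```python
import time
from typing import Callable, Dict, List


class EventQueue:
    """Batches events and flushes when the batch is full or the interval has passed."""

    def __init__(
        self,
        send: Callable[[List[Dict]], None],
        flush_interval_s: float = 15.0,
        max_batch: int = 20,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._send = send
        self._interval = flush_interval_s
        self._max = max_batch
        self._clock = clock
        self._events: List[Dict] = []
        self._last_flush = clock()

    def add(self, event: Dict) -> None:
        self._events.append(event)
        self.maybe_flush()

    def maybe_flush(self) -> None:
        due = self._clock() - self._last_flush >= self._interval
        full = len(self._events) >= self._max
        if self._events and (due or full):
            # Swap the buffer out before sending so new events start a fresh batch.
            batch, self._events = self._events, []
            self._send(batch)
            self._last_flush = self._clock()
```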

Five or more occurrences of the same crash fingerprint inside a 1-hour window automatically open an alert in the admin dashboard, routed to the owner email on the key.
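A sliding-window sketch of that alert rule. The threshold of five and the 1-hour window come from the text; `CrashAlerter`, the in-memory storage, and opening only one alert per fingerprint are assumptions. It also assumes timestamps arrive in non-decreasing order.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Set

WINDOW_SECONDS = 3600  # 1-hour window
THRESHOLD = 5          # occurrences needed to open an alert


class CrashAlerter:
    def __init__(self) -> None:
        self._seen: Dict[str, Deque[float]] = defaultdict(deque)
        self._open: Set[str] = set()

    def record(self, fingerprint: str, timestamp: float) -> bool:
        """Record one crash; return True only when it opens a new alert."""
        times = self._seen[fingerprint]
        times.append(timestamp)
        # Drop occurrences that have fallen out of the window.
        while times and timestamp - times[0] >= WINDOW_SECONDS:
            times.popleft()
        if len(times) >= THRESHOLD and fingerprint not in self._open:
            self._open.add(fingerprint)
            return True
        return False
```

Notifying the owner email would hang off the `True` return; that part is left out because the delivery mechanism is not described here.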

Call UserTrack.setUserId(id) and every subsequent event carries user_id, screen_name, and duration_ms as first-class columns the admin SDK panel can filter and sort on.

SDK events from your app surface alongside tester notes and screenshots in the same admin view, so crashes line up with the tester feedback that describes them.

Tell us what you're building. Saga & Seeker owners run your app through a 5-step walkthrough with global + custom questions. You get the raw submissions plus the AI-assisted summary.

Drop your app in. Verified testers will run it, break it, and tell you exactly what to fix. Backed by proof.